The Warm Fuzzy Feeling You Get With Full Test Coverage

I am writing this blog post for PMs, POs, and founders who are not developers.

In particular, it is for people who sometimes struggle to understand developers:

- What does a developer actually mean when they ask for “more time for tests”?

- Why do we have to pay so much for CI minutes?

- How can writing tests take longer than the feature itself?

These are fair questions, and there are good reasons behind the answers.

Here are the 7 perks that projects experience when there’s full test coverage.

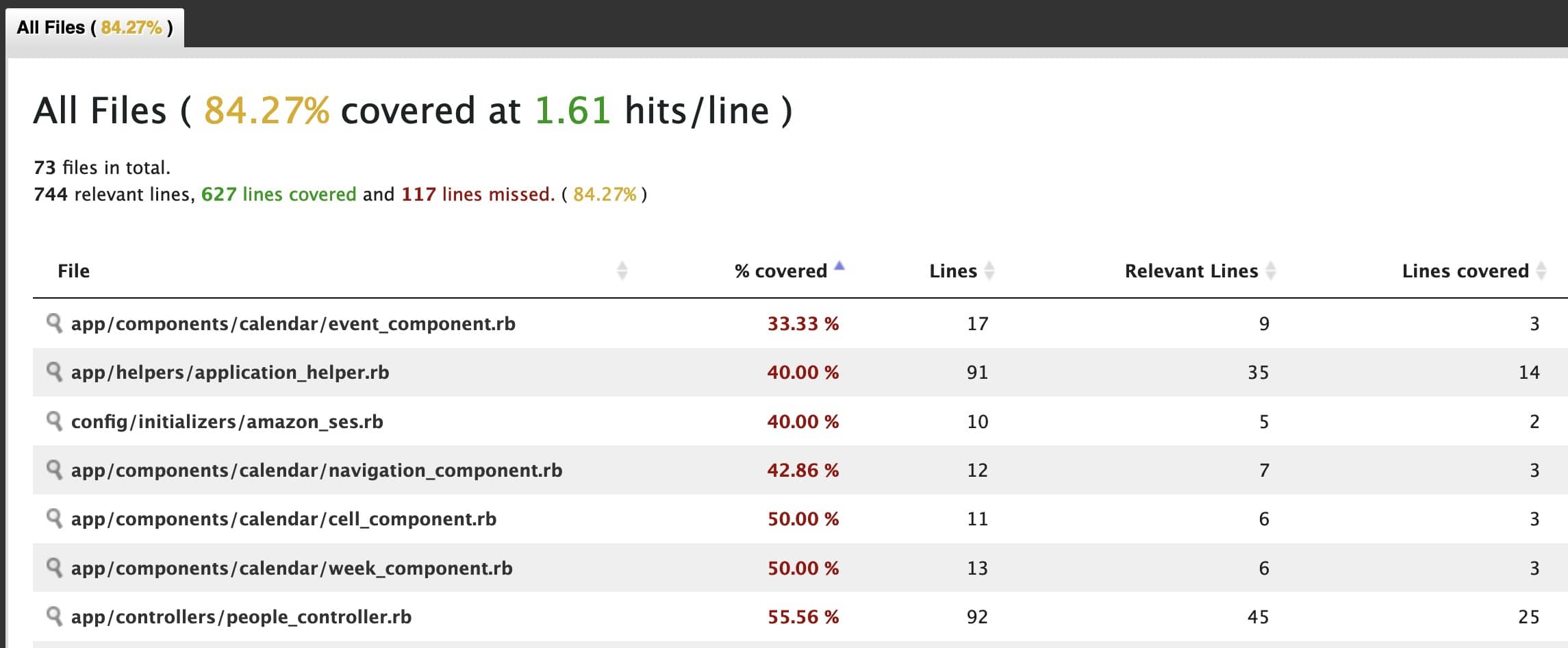

Before we start: developers know that full test coverage only means “you’ve written enough tests to execute every line of code in your application.” Technically, you can have full test coverage and still be missing a lot of things you should be testing.
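To make that caveat concrete, here is a minimal Python sketch. The function `average_order_value` and its test are hypothetical, invented purely for illustration. The single test runs every line of the function, so a coverage tool would report 100%, yet one input that nobody tested still crashes it.

```python
def average_order_value(total_revenue: float, order_count: int) -> float:
    """Hypothetical helper: revenue per order."""
    return total_revenue / order_count


def test_average_order_value() -> None:
    # This one test executes every line of the function: 100% line coverage.
    assert average_order_value(100.0, 4) == 25.0


test_average_order_value()

# The case nobody tested: a day with zero orders still crashes.
try:
    average_order_value(0.0, 0)
    crashed = False
except ZeroDivisionError:
    crashed = True

print("Crashes on zero orders:", crashed)
```

Coverage measures which lines ran during the tests, not whether every meaningful input was tried.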

However, for the sake of this article, let’s assume you are actually testing everything according to best practices, and your developers tell you they have thought of everything and are satisfied.

#1 Refactoring will be super easy

Quick recap:

Refactoring is the process of modifying existing code to improve its readability, performance, or maintainability, without changing its behavior.

Refactoring always happens, especially when systems grow. And it’s crucial if you want to cut down technical debt.

Now, it can also be a daunting task, especially in a large codebase. You might have heard this saying, which is sometimes attributed to Bill Gates:

Never change a running system.

That was true many years ago, when software was still rare in many industries and there was not much competition around SaaS. You might disagree with me, but I’d love it if Microsoft would refactor Teams a bit more.

With 100% test coverage, refactoring becomes way easier, because the tests normally don’t need to change (okay, except unit tests). Feature and integration tests will likely stay the same, so the developers can rerun them and see whether the code is still doing what it’s supposed to do.

Changes can be made with the confidence that they will not break existing functionality. Automated tests will catch any regressions introduced during the refactoring process.
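As a rough illustration of that idea, the sketch below shows one set of checks being run against the code before and after a refactoring. The functions `total_price_v1` and `total_price_v2` are hypothetical and only stand in for "old code" and "cleaned-up code".

```python
def total_price_v1(prices: list[float], discount: float) -> float:
    # Original implementation: an explicit loop.
    total = 0.0
    for price in prices:
        total = total + price
    return total - total * discount


def total_price_v2(prices: list[float], discount: float) -> float:
    # Refactored implementation: shorter, intended to behave the same.
    return sum(prices) * (1 - discount)


def check_behavior(total_price) -> None:
    # The same checks run unchanged against either version.
    assert total_price([], 0.0) == 0
    assert total_price([10.0, 20.0], 0.0) == 30.0
    assert total_price([10.0, 30.0], 0.5) == 20.0


check_behavior(total_price_v1)  # passes before the refactoring
check_behavior(total_price_v2)  # still passes afterwards
```

If the refactored version had changed the result for any of these inputs, the second call would fail immediately, which is the safety net described above.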

#2 You are able to build big systems

I had a conversation with someone on LinkedIn:

Our biggest problem? We face some stability issues, as our test coverage is somewhat low (around 30%). The FE is built with TypeScript, which seems more durable.

Side note: I’ll write about this in another post. Just a small refresher: Ruby does not have built-in static type checking, so tests are even more important.

As the project grows in size and complexity, it becomes increasingly difficult to ensure that changes won’t introduce new bugs or regressions into the codebase.

A regression is, by definition:

A type of software bug where a feature that worked before stops working.

Automated tests can help fill this gap, allowing developers to test the system more thoroughly and catch potential issues before they become problems.

With 100% test coverage, developers can build big systems with confidence. They can be sure that their changes will not break existing functionality, and automated tests will catch any regressions.

There’s more to it: as the system grows, there will be fewer and fewer people who understand it as a whole. Tests will bridge some of this gap. Devs can rely on tests to tell them if the system still works when all parts are connected.

#3 You can gain confidence handling millions of users

One of the challenges of building large systems is handling a large number of users. With 100% test coverage, developers can gain confidence in the system’s ability to handle millions of users.

It’s obvious, but let me explain it a bit more:

With lots of users come lots of variations. Those might be small differences in input data. Let’s say there’s a certain method that’s only called in 0.1% of cases. With 100 users, it might never get called at all. Only 1 in 1,000 users might hit that method, and you might not even notice it in the logs.

With 100% test coverage, your developers have ensured that it gets tested before going into production.
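The arithmetic behind the 0.1% example can be sketched in a few lines of Python. This is a simplification: it assumes every user independently triggers the rare method with a probability of 0.1%, and the function name is made up for illustration.

```python
def chance_rare_path_is_hit(users: int, hit_rate: float = 0.001) -> float:
    """Chance that at least one user triggers a path hit in 0.1% of cases.

    Assumes each user triggers the path independently, once, with
    probability `hit_rate` (a simplification for illustration).
    """
    return 1 - (1 - hit_rate) ** users


for users in (100, 1_000, 1_000_000):
    chance = chance_rare_path_is_hit(users)
    expected_hits = users * 0.001
    print(f"{users:>9,} users: {chance:.1%} chance of at least one hit, "
          f"about {expected_hits:,.1f} expected hits")
```

With 100 users the chance is under 10%, so the method can easily go unexercised during a pilot. With a million users it is all but certain to run, roughly a thousand times, so any bug in it will surface in production unless a test caught it first.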

Automated tests, load tests in particular, can simulate a large number of users and catch performance issues before they become problems. This level of confidence is essential, especially for mission-critical applications.

#4 It will still break, but less likely

Although 100% test coverage provides a safety net for code changes and modifications, it does not guarantee that the code is bug-free or perfect. Bugs can still exist in the code, and it is still possible to introduce new ones.

However, with 100% test coverage, the likelihood of breaking the code is lower.

Breakage is then limited to a few special cases.

Some examples:

- Integration tests have been thorough but edge cases were missing

- External APIs deliver inconsistent data and your system hasn’t dealt with all eventualities

- Race conditions appear when users operate on the same data at the same time

Those problems will always be there, even with 100% test coverage. But at least you will only have to deal with these hard bugs, not with obvious errors that great test coverage could have prevented.
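The race condition case is the least intuitive of the three, so here is a minimal Python sketch of it. Everything in it is hypothetical: `withdraw`, the two users, and the amounts are made up, and the "simultaneous" access is simulated step by step rather than with real parallel requests.

```python
def withdraw(balance: int, amount: int) -> int:
    """Hypothetical helper: return the balance after a withdrawal."""
    return balance - amount


# A test for one user at a time passes and covers every line.
assert withdraw(100, 30) == 70

# Two users act at the same time: both read the balance before either writes.
stored_balance = 100
alice_sees = stored_balance
bob_sees = stored_balance
stored_balance = withdraw(alice_sees, 30)  # Alice saves 70
stored_balance = withdraw(bob_sees, 50)    # Bob overwrites it with 50

expected_balance = 100 - 30 - 50           # 20 if both withdrawals counted
lost_update = stored_balance != expected_balance
print("Stored:", stored_balance, "Expected:", expected_balance)
```

Every line of `withdraw` is covered and correct, yet Alice's withdrawal is lost. The bug lives in the timing between the steps, which is why this class of problem survives even full coverage.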

#5 Developers don’t fear being blamed

Imagine you don’t have good test coverage. Your stakeholders push the project, they want to meet deadlines, and you want to support them.

- You start to test more and more manually.

- Every time you deploy, you go through everything on staging.

(I’m actually getting chills imagining this situation)

And yet, something breaks. It’s a feature your developer worked on.

Now, most developers are rational.

They will see the exact line that causes the issue and will blame themselves. Their fun at work might take a small hit.

Now, what if you have great test coverage?

Things will still break, but every merge/pull request will come with solid tests. The tests are run on the continuous integration (CI) system. If something breaks now, the developer can at least say that they ticked all the boxes. They won’t fear being blamed every time they submit a change to the code base.

#6 Code reviews become easy

People who haven’t read many tests might not know this:

Tests help to specify software. And this is great in code reviews, because the reviewer can check the ticket definition, look at the tests, and figure out if the specification was met.

With 100% test coverage, code reviews become easy. Reviewers can trust that if the tests match the content of the ticket, and the code passes the CI pipeline, then the code is probably correct.

A subsequent read of the code is still necessary, but it can focus on best practices, conciseness, and performance. Because the tests pass, the code’s robustness and correct functioning are already largely established.
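Here is a small sketch of what "tests as specification" can look like in Python. The ticket ("users must be at least 18 to register"), the constant `MINIMUM_AGE`, and the function `can_register` are all assumptions made up for this example.

```python
MINIMUM_AGE = 18  # assumed requirement from the hypothetical ticket


def can_register(age: int) -> bool:
    """Hypothetical rule: only users aged 18 or older may register."""
    return age >= MINIMUM_AGE


# Each test name reads like one acceptance criterion from the ticket.
def test_user_aged_17_cannot_register() -> None:
    assert can_register(17) is False


def test_user_aged_18_can_register() -> None:
    assert can_register(18) is True


test_user_aged_17_cannot_register()
test_user_aged_18_can_register()
```

A reviewer can compare the test names with the ticket line by line, even without reading the implementation first.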

An interesting thing I learned from Adrien Guéret:

A coverage set below 100% leads to two things:

- loss of information;

- less accountability.

To me, this reflects exactly the broken window theory - once you accept less than 100% code coverage, the next code change lets coverage slip a little further, and then the one after that. I have seen this in my own code when working on personal projects. It’s not a feeling you want to hand over to your team.

#7 You can be sure that old features will work indefinitely

Software is never “done”. Things are always changing: dependencies get updated and security patches need to be applied. Software is also never perfect, so there’s always change.

As long as a project gets used, it’s modified.

If your project has great test coverage, your team can work on new features with ease. If they break old ones, they will see what broke and can fix it immediately.

Imagine manually testing old features. It’s almost impossible to remember old use cases. It just doesn’t work. But with full test coverage, you can be sure that the old code still works.

Automated tests will catch any regressions introduced during the modification process. This makes it easier to maintain and update old features.

Conclusion

I wrote this for people to use as an argument next time they face a stakeholder who wants to do things “the quick way”. Yes, there is an 80/20 approach which I do promote, but for critical software that absolutely needs to work, there’s no replacement for 100% coverage.