The 80/20 of testing

February 16, 2023

With testing, you can fail in both directions:

- Write too many tests: you lose time that you need to market your startup;

- Write too few tests: refactoring becomes harder and harder, and developers become unhappy because touching one part of the code breaks another.

This is especially true for Ruby on Rails, which has no static type checking. Additionally, Rails comes with a lot of dynamic methods that are easily misspelled, and nobody notices until a test is run.
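Here is a minimal sketch of that problem in plain Ruby. The `Event` class and its attributes are made up for this example and only stand in for a Rails model. The file loads without complaint, and the misspelled method name only surfaces when the line actually runs:

```ruby
# Hypothetical class, standing in for a Rails model with generated accessors.
class Event
  attr_reader :title, :location

  def initialize(title:, location:)
    @title = title
    @location = location
  end

  # Typo: `locaton` instead of `location`. Ruby accepts this definition,
  # because names are only resolved when the call actually happens.
  def summary
    "#{title} at #{locaton}"
  end
end

event = Event.new(title: "Launch party", location: "Berlin")

begin
  event.summary
rescue NameError => e
  # NoMethodError is a subclass of NameError, so this catches both.
  puts "Caught only at runtime: #{e.class}"
end
```

Any test that calls `summary` would have caught this before a user did.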

So, just test manually?

Yes, there's the argument to not write tests below a certain revenue number:

Claiming everything must be polished, tested and documented is the worst form of procrastination.

While this sounds good in theory, there are a couple of problems. Especially when writing code for APIs, you want an automated system that runs the tests and does not rely on the live API. In those cases, tests will actually save you time!

Without tests (especially automated browser tests), shipping code becomes harder. As gregors pointed out on Hacker News (HN):

success in shipping code that works and finding and fixing bugs takes considerably less time when there are at least some automated tests available.

So, tests will save time?!

Yes, but only if you don't overdo it. Confused? Worry not!

In this post I'll explain to you the testing approach my company adopts for new projects. I'll also show you which tests are necessary and which ones you can skip without ending up with a bug-infested system.

The 80/20 testing approach

- ✅ Integration tests

- ✅ Feature / frontend tests

- ❌ Job specs

- ❌ Unit tests

- ❌ Mailer specs

- ❌ Request tests

- ❌ Routing specs

- ❌ Helper tests

- ❌ View tests

The 2 essential test types take care of the rest

If you are not familiar with the Pareto principle (or the 80/20 rule as Richard Koch calls it), the basic idea is this:

- 20% of the actions you take result in 80% of the outcomes and,

- 80% of the actions have minimal impact and in most cases, these actions should not be performed.

➡️ For software testing, this means 20% of the tests can cover 80% of our code.

How can we do that?

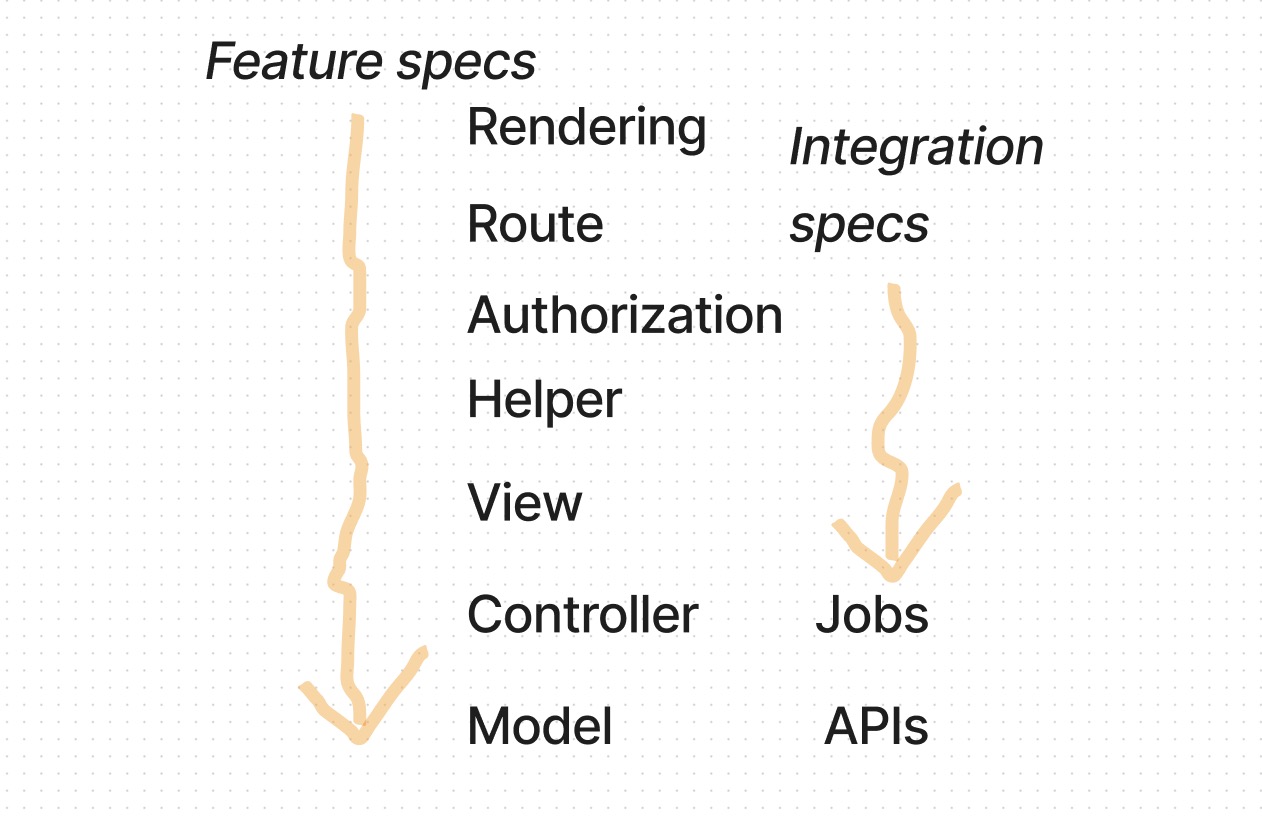

By not testing each layer, but testing deep into the stack.

Feature specs FTW

Either you're writing tests or you're... manual testing.

Everyone who skips tests should keep this in mind (a good line from the above-mentioned comment on HN). There always needs to be testing: either your code does it, or someone does it manually.

The one thing that makes feature specs stand out is this: a feature spec acts like someone clicking through the application, while also checking the data directly in the database.

Here is an example from a recent Hotwire app I built, which has events and attendances:

- The user logs in: Test authentication

- The user is admin and can change events: Test authorization

- They create an event by clicking the + button: Test the route and view

- The event has a location: Test the model

- The user adds an attendee: Test the view, helper, request and response

- The attending person gets an email: Test the emails
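To make the idea concrete, here is a rough, runnable sketch of how one test can walk through all of these steps. It is not the code from the app and it is not a real feature spec: a real one would drive a browser (for example with Capybara). `App`, `User`, `Event` and `Attendance` are hypothetical in-memory stand-ins for the Rails layers, and Minitest is used only because it ships with Ruby:

```ruby
require "minitest/autorun"

# Hypothetical in-memory stand-ins for the app's layers (not real Rails code).
User = Struct.new(:email, :password, :admin)
Attendance = Struct.new(:email)

class Event
  attr_reader :title, :location, :attendances

  def initialize(title:, location:)
    # Model validation: every event needs a location.
    raise ArgumentError, "location is required" if location.to_s.empty?

    @title = title
    @location = location
    @attendances = []
  end
end

class App
  attr_reader :events, :outbox

  def initialize(users)
    @users = users
    @events = []
    @outbox = []
    @current_user = nil
  end

  # Authentication
  def log_in(email, password)
    @current_user = @users.find { |u| u.email == email && u.password == password }
    raise "invalid credentials" unless @current_user
  end

  # Authorization, then model validation inside Event.new
  def create_event(title:, location:)
    raise "not authorized" unless @current_user&.admin

    Event.new(title: title, location: location).tap { |event| @events << event }
  end

  # Stores the attendance and "sends" an email by adding it to the outbox
  def add_attendee(event, email)
    raise "not authorized" unless @current_user&.admin

    event.attendances << Attendance.new(email)
    @outbox << { to: email, subject: "You are attending #{event.title}" }
  end
end

class EventFlowTest < Minitest::Test
  def test_admin_creates_event_and_adds_attendee
    app = App.new([User.new("admin@example.com", "secret", true)])

    app.log_in("admin@example.com", "secret")
    event = app.create_event(title: "Meetup", location: "Berlin")
    app.add_attendee(event, "guest@example.com")

    # Check the stored data as well as the side effect (the email).
    assert_equal "Berlin", app.events.first.location
    assert_equal ["guest@example.com"], event.attendances.map(&:email)
    assert_equal ["guest@example.com"], app.outbox.map { |mail| mail[:to] }
  end
end
```

One test, three assertions, and authentication, authorization, the model and the email are all exercised along the way.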

Integration tests: For everything invisible

Let's stick with the previous example: there are things happening in our application that are unrelated to user interaction. That is, when the user does something, there is no direct visible response.

Examples: an API call or a background job.

For those things we cannot easily use feature tests. It is much better to use integration tests, defined as:

A phase in software testing where individual software modules are combined and tested as a group.

Following on our previous example, the system sends SMS and emails on the morning of the event for each attendee.

- An event was created last week, with a start time of today at 1 pm. We use factory_bot here, as it is the industry standard.

- From the test setup, we run the job that gets scheduled every morning (tests the job)

- The user gets an email (tests the emails, again)

- The system hits the Twilio API to send an SMS if the user has a number (tests the API and its response, also tests the model)
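The steps above could look roughly like this. Treat it as a sketch under assumptions: `MorningReminderJob` and `FakeSmsGateway` are hypothetical names, the fake gateway stands in for Twilio so the test never touches the network, and plain Structs replace the records that factory_bot would normally build. None of this is the real Twilio or factory_bot API:

```ruby
require "date"
require "minitest/autorun"

# Hypothetical stand-ins for the models a factory would build.
Attendee = Struct.new(:email, :phone)
Event = Struct.new(:title, :starts_at, :attendees)

# Replaces the real SMS provider and records what would have been sent.
class FakeSmsGateway
  attr_reader :sent

  def initialize
    @sent = []
  end

  def deliver(to:, body:)
    @sent << { to: to, body: body }
  end
end

# The job that would be scheduled every morning.
class MorningReminderJob
  def initialize(events:, sms:, outbox:)
    @events = events
    @sms = sms
    @outbox = outbox
  end

  def perform(today)
    todays_events = @events.select { |event| event.starts_at.to_date == today }
    todays_events.each do |event|
      event.attendees.each do |attendee|
        message = "Reminder: #{event.title} is today"
        @outbox << { to: attendee.email, subject: message }
        # Only attendees with a phone number get an SMS.
        @sms.deliver(to: attendee.phone, body: message) if attendee.phone
      end
    end
  end
end

class MorningReminderJobTest < Minitest::Test
  def test_reminds_every_attendee_on_the_day_of_the_event
    with_phone = Attendee.new("ann@example.com", "+15550100")
    without_phone = Attendee.new("bob@example.com", nil)
    event = Event.new("Meetup", Time.new(2023, 2, 16, 13, 0), [with_phone, without_phone])
    sms = FakeSmsGateway.new
    outbox = []

    job = MorningReminderJob.new(events: [event], sms: sms, outbox: outbox)
    job.perform(Date.new(2023, 2, 16))

    # Both attendees get an email, only the one with a number gets an SMS.
    assert_equal ["ann@example.com", "bob@example.com"], outbox.map { |mail| mail[:to] }
    assert_equal ["+15550100"], sms.sent.map { |message| message[:to] }
  end
end
```

Passing the gateway into the job is a design choice of this sketch. It keeps the test independent of the external API, which is the point made earlier about not relying on the API when running tests.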

Can we really skip unit tests?

In most cases: yes, you can. We still write lots of unit tests for our clients because: a) the components involve money and absolutely cannot fail, and b) these clients don't even want to apply the 80/20 rule of testing. And that's fine.

There's also another case that requires unit tests: test-driven development (TDD).

The TDD methodology prioritizes creating tests above producing code. It starts with creating a test for a specific piece of code, then creating the code to pass the test until all tests pass and the code is finished.

- When writing certain code, the writing itself can be faster when starting with tests.

Unit Tests: Only for TDD!

The same Hacker News thread gave us another perspective:

I was working on library that had to do a lot of vector math [...] What I didn't expect is that the tests gave me so much confidence to improve, extend and refactor the code.

Here it totally made sense: use unit tests to think about a problem, define the outcome first, and then code it out. This speeds up the development process and also ensures stability later on.
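As a small illustration of that order of work, here is a sketch in the spirit of the vector math example. `Vector2` is a hypothetical name and has nothing to do with the commenter's library. The tests are written first and define the outcome; the implementation below them is just enough to make them pass:

```ruby
require "minitest/autorun"

# Step 1: describe the expected outcome before any implementation exists.
class Vector2Test < Minitest::Test
  def test_addition_is_component_wise
    sum = Vector2.new(1, 2) + Vector2.new(3, 4)
    assert_equal Vector2.new(4, 6), sum
  end

  def test_length_of_a_3_4_vector_is_5
    assert_in_delta 5.0, Vector2.new(3, 4).length
  end
end

# Step 2: write just enough code to make the tests pass.
Vector2 = Struct.new(:x, :y) do
  def +(other)
    Vector2.new(x + other.x, y + other.y)
  end

  def length
    Math.sqrt(x**2 + y**2)
  end
end
```

Once the tests pass, they stay around as a safety net for later refactoring, which is the confidence the quote describes.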

Also, it's 2023 now: you could easily write a unit test for a function and then throw it into ChatGPT and see if it comes up with an implementation already. Of course, these results need to get checked thoroughly, but you may save some time here.

What if you don't do TDD?

Then there's a good chance unit tests will just confirm what you already wrote. That means you only test for the things you already expect. And that can lead to unexpected results, as described in this opinion, which I have already seen play out in bigger projects:

teams start investing in unit testing and they noticeably slow down and ship more bugs at the same time.

Unit tests by themselves don't help you understand a full system. It then looks like you have test coverage, but in reality you only test trivial things. That's exactly the bad spot we need to avoid: fooling ourselves.

With this 80/20 approach you keep the code base smaller and still feel secure that it does what it should.

Finally, yeah, by all means, write some unit tests. But do it the smart way and apply TDD.

What's your experience with testing? I'd love it if you let me know!

Photo by girlwithredhat on Unsplash